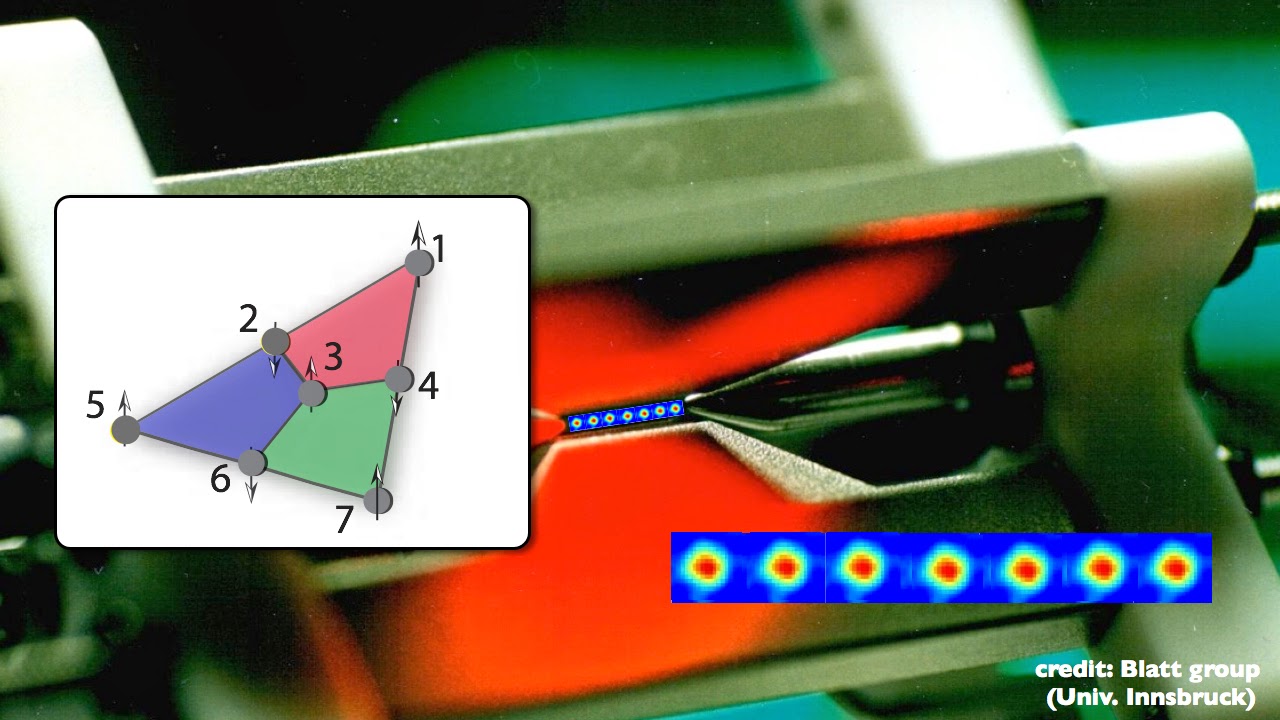

They showed how physicists can spread a single qubit’s worth of information over multiple physical qubits, to build reliable quantum computers out of unreliable components. The game changed in 1995, when Peter Shor of Bell Labs and, independently, Andrew Steane of the University of Oxford developed quantum error correction. Faced with the huge gap between theory and practice, physicists in the early days worried that quantum computing would remain a scientific curiosity. Yet today’s best machines make an error every 1,000 gates. That feat demands they make at most a single error every billion gates. The trouble is that many proposals to solve useful problems require quantum computers to perform billions of logical operations, or “gates,” on hundreds to thousands of qubits. These capabilities give quantum computers their power to perform certain functions extremely efficiently and potentially speed up a wide range of applications: simulating nature, investigating and engineering new materials, uncovering hidden features in data to improve machine learning, or finding more energy-efficient catalysts for industrial chemical processes. Because qubit states are in the form of waves, they can interfere, just as light waves do, leading to a much richer landscape for computation than just flipping bits. By combining qubits through a quantum phenomenon called entanglement, we can store vast amounts of information collectively, much more than the same number of ordinary computer bits can. There I devoted myself to improving operations among multiple linked qubits and exploring how to correct errors. I helped run several quantum-computing research programs at IARPA and later joined IBM. My experience with superconductivity was suddenly in demand. Another decade later, in 2007, they invented the basic data unit that underlies the quantum computers of IBM, Google and others, known as the superconducting transmon qubit. 
Although Paul Benioff of Argonne National Laboratories had proposed them in 1980, it took physicists nearly two decades to build the first one.

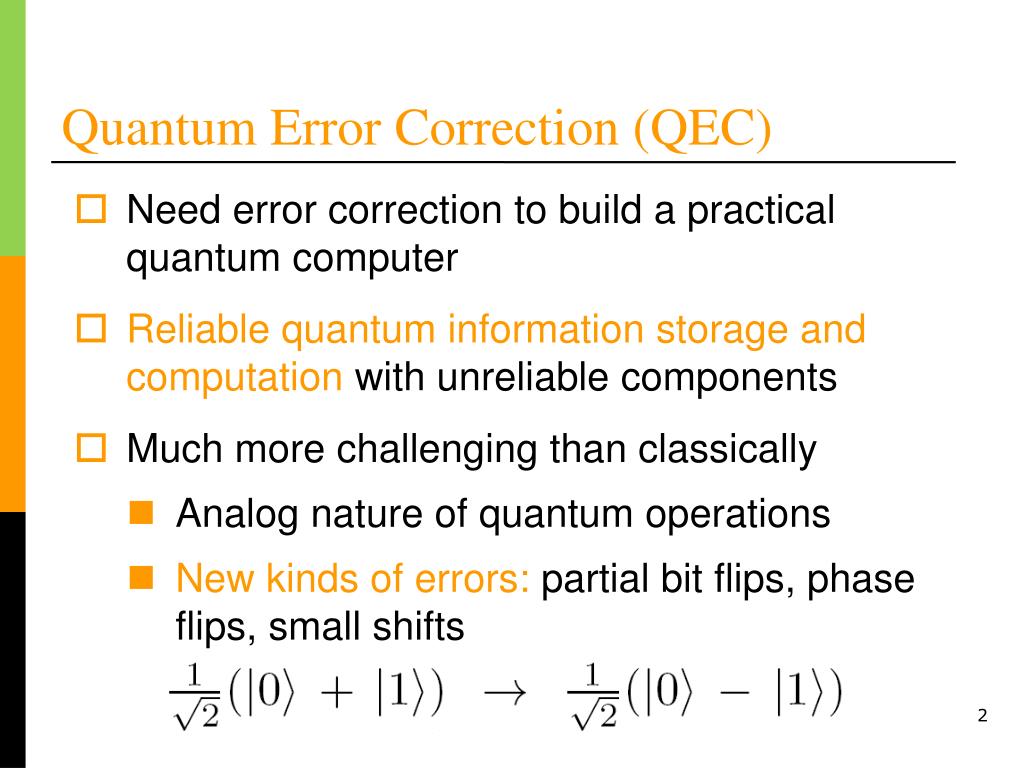

Quantum computers were in their earliest stages then. There I sought to employ the fundamentals of nature to develop new technology. Department of State, which next led me to the Defense Advanced Research Projects Agency (DARPA) and the Intelligence Advanced Research Projects Activity (IARPA). That came later when I took a hiatus to work on science policy at the U.S. I began as a condensed-matter theorist investigating materials’ quantum-mechanical behavior, such as superconductivity at the time I was oblivious to how that would eventually lead me to quantum computation. I am a physicist working in quantum computing at IBM, but my career didn’t start there. Quantum computers suffer types of errors that are unknown to classical computers and that our standard correction techniques cannot fix. But with great power comes great vulnerability. The implications for science and business could be profound. These emerging machines exploit the fundamental rules of physics to solve problems that classical computers find intractable. This law of inevitability applies equally to quantum computers. Errors may be inevitable, but they are also fixable.

Error correction is one of the most fundamental concepts in information technology. Images from space probes can travel hundreds of millions of miles yet still look crisp. QR codes can be blurred or torn yet are still readable. Mitigating and correcting them keeps society running. They are everywhere: in language, cooking, communication, image processing and, of course, computation. It is a law of physics that everything that is not prohibited is mandatory.
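To make the idea of error correction concrete, here is a minimal sketch of the simplest classical scheme, a repetition code with majority voting. It is only a classical analogy and not the quantum codes of Shor or Steane; the function names and the assumption that each copy is flipped independently with the same probability are illustrative choices, not something taken from the article.

```python
from math import comb


def encode(bit, n=3):
    """Store one logical bit as n identical physical copies (n should be odd)."""
    return [bit] * n


def decode(bits):
    """Recover the logical bit by majority vote over the copies."""
    return 1 if sum(bits) > len(bits) / 2 else 0


def majority_failure_probability(p, n=3):
    """Probability that more than half of n copies are flipped,
    assuming each copy flips independently with probability p."""
    return sum(
        comb(n, k) * p**k * (1 - p) ** (n - k)
        for k in range(n // 2 + 1, n + 1)
    )


if __name__ == "__main__":
    # One flipped copy out of three is outvoted by the other two.
    print(decode([0, 1, 0]))
    # Compare the raw error rate with the rate after majority voting.
    for n in (1, 3, 5):
        print(n, majority_failure_probability(0.001, n))
```

Under these assumptions, a one-in-1,000 chance of flipping a single copy becomes roughly a three-in-a-million chance of a wrong majority across three copies, which illustrates how redundancy can make a reliable whole out of unreliable parts.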
